
How many AI hallucinations are happening globally?

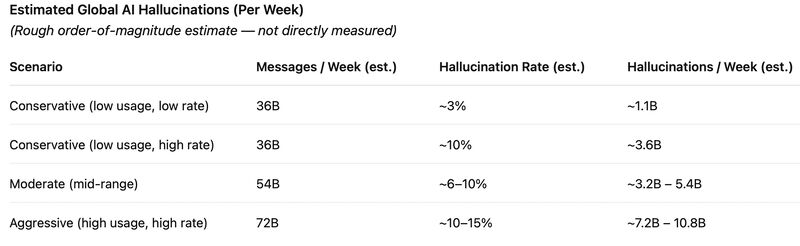

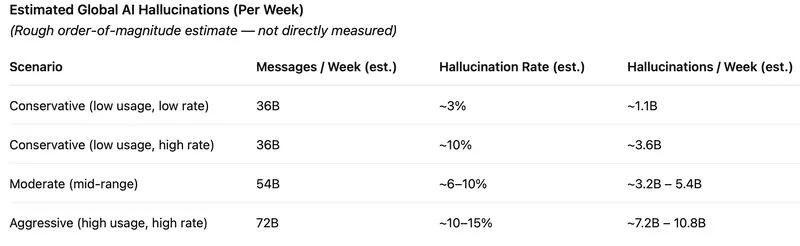

This is a rough, order-of-magnitude estimate based on:

* reported usage (e.g., ~18B messages/week for ChatGPT alone)

* published hallucination rates (which vary widely by task)

Simple model:

H ≈ p × N

(H = hallucinated outputs per week, p = hallucination rate, N = total AI outputs per week)

Assumptions (approximate):

* Total global AI usage: ~2–4× ChatGPT scale (~36–72B messages/week)

* Hallucination rates: ~3% to ~15% depending on domain
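
As a rough check, the simple model and the assumptions above can be plugged into a few lines of Python. This is a minimal sketch, not a measurement: the constant and function names are made up for illustration, and each reported message is treated as one AI output.

```python
# Back-of-envelope sketch of H ≈ p × N using the post's rough inputs.
# All names here are illustrative; the numbers are the post's approximations.
CHATGPT_MESSAGES_PER_WEEK = 18e9    # ~18B messages/week (reported usage)
GLOBAL_MULTIPLIER = (2, 4)          # assumed: global usage is ~2-4x ChatGPT scale
HALLUCINATION_RATE = (0.03, 0.15)   # assumed: ~3% to ~15% depending on domain


def estimate_hallucinations(p: float, n: float) -> float:
    """Return H ≈ p × N for hallucination rate p and output count n."""
    return p * n


def weekly_range() -> tuple[float, float]:
    """Pair the low rate with low usage and the high rate with high usage."""
    low = estimate_hallucinations(
        HALLUCINATION_RATE[0], GLOBAL_MULTIPLIER[0] * CHATGPT_MESSAGES_PER_WEEK
    )
    high = estimate_hallucinations(
        HALLUCINATION_RATE[1], GLOBAL_MULTIPLIER[1] * CHATGPT_MESSAGES_PER_WEEK
    )
    return low, high


if __name__ == "__main__":
    low, high = weekly_range()
    print(f"{low / 1e9:.1f}B to {high / 1e9:.1f}B hallucinations/week")
```

With these inputs the bounds come out at roughly 1.1B and 10.8B per week, which is where the conservative and aggressive ends of the range come from.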

⸻

Estimated range (per week, global)

* Conservative: ~1B hallucinations/week

* Moderate: ~3–5B hallucinations/week

* Aggressive: ~7–10B hallucinations/week

⸻

Important caveats

* These are not directly measured numbers

* Hallucination rates vary a lot by domain (legal ≫ casual chat)

* Not every output contains factual claims

* Some hallucinations are harmless; others matter a lot
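
One way to see why the domain caveat matters: the single rate p is really a usage-weighted average across domains. The sketch below assumes a made-up traffic mix. The domain shares and per-domain rates are placeholders chosen inside the ~3% to ~15% range, not measured values, and `blended_rate` is a hypothetical helper.

```python
import math

# Hypothetical traffic mix: domain -> (share of outputs, hallucination rate).
# These numbers are placeholders for illustration, not measurements.
ILLUSTRATIVE_MIX = {
    "casual_chat": (0.60, 0.03),
    "other": (0.30, 0.05),
    "legal": (0.10, 0.15),
}


def blended_rate(mix: dict[str, tuple[float, float]]) -> float:
    """Usage-weighted average hallucination rate across domains."""
    total_share = sum(share for share, _ in mix.values())
    if not math.isclose(total_share, 1.0):
        raise ValueError("domain shares must sum to 1")
    return sum(share * rate for share, rate in mix.values())


if __name__ == "__main__":
    print(f"blended p = {blended_rate(ILLUSTRATIVE_MIX):.3f}")
```

In this made-up mix, the small high-rate domain contributes as much to the blended rate as a domain three times its size, so the overall estimate is sensitive to how usage is distributed.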

⸻

Takeaway

Even under conservative assumptions:

AI systems likely produce on the order of billions of hallucinated outputs per week globally.

And because usage is growing faster than accuracy improves,

total hallucinations are likely increasing, not decreasing.
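
The growth claim follows from the same H ≈ p × N model: if usage grows by a fraction g while the rate falls by a fraction d, the total changes by (1 + g) × (1 − d), so it rises whenever that product exceeds 1. A minimal sketch, with a hypothetical function name and example percentages that are purely illustrative:

```python
def total_change_factor(usage_growth: float, rate_reduction: float) -> float:
    """Factor by which H = p × N changes over one period.

    usage_growth:   fractional increase in N (0.5 means +50%).
    rate_reduction: fractional decrease in p (0.2 means -20%).
    """
    return (1.0 + usage_growth) * (1.0 - rate_reduction)


if __name__ == "__main__":
    # Illustrative inputs only: usage +50%, hallucination rate -20%.
    factor = total_change_factor(0.5, 0.2)
    print("total rises" if factor > 1 else "total falls or stays flat")
```

Under this model, a 20% drop in the rate is outweighed by any usage growth above 25%.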

⸻

Sources:

* OpenAI usage report: https://lnkd.in/g2UeiFBB

* Global AI usage stats: https://lnkd.in/gCx85VEf

* Hallucination studies: https://lnkd.in/gHNkkyHr, https://lnkd.in/gbw5JzNb