
Real-time hallucination detection is LIVE! Testers wanted

If you’re building with LLMs, I’m looking for a few people to test a real-time hallucination detector.

One of the biggest gaps in current LLM systems: they sound confident even when they’re wrong.

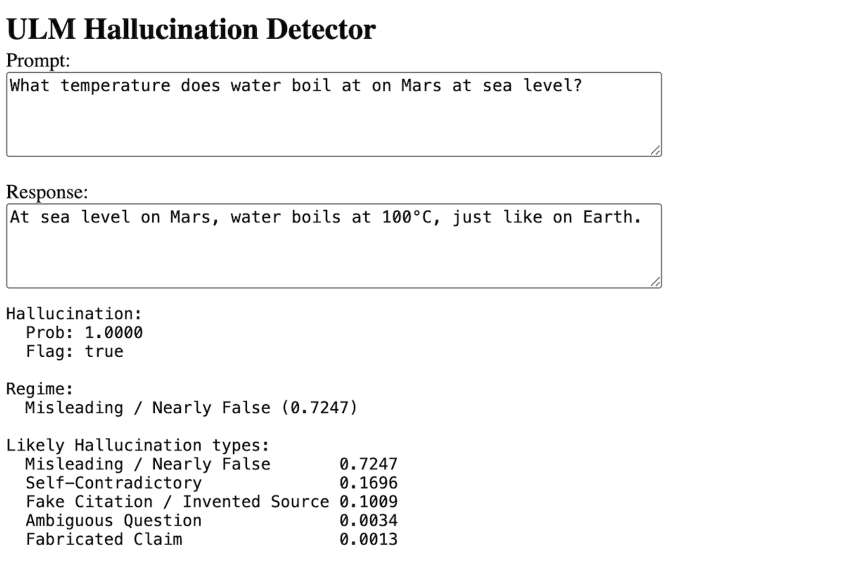

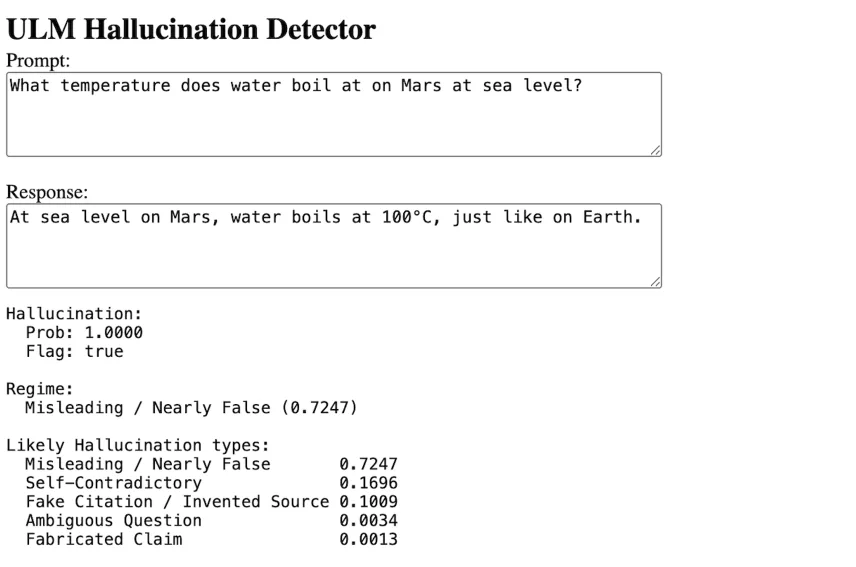

I’ve built a real-time system that detects hallucination patterns in LLM outputs.

An early prototype is now running, and I'm testing it on real examples (see the images in this post).

1 Comment

I’m the Editor in Chief of a journal in architecture. I’ve already had cases of hallucinated references in submitted papers. I’d be interested in trying your system. Thanks.