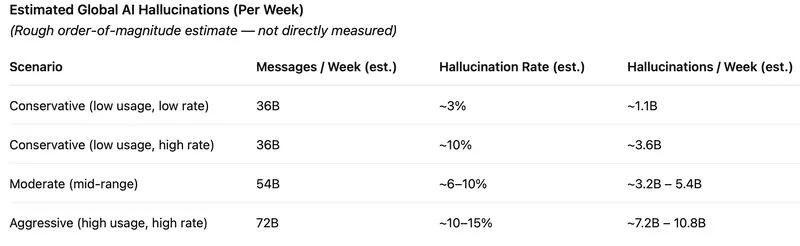

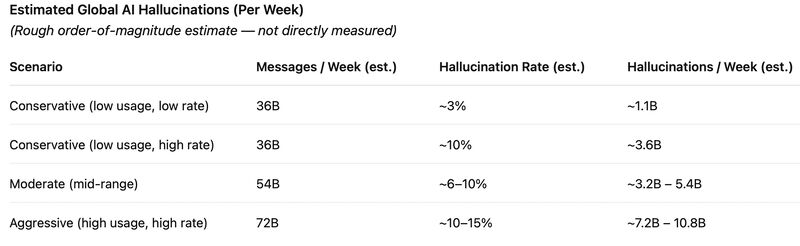

LLMs are already producing billions of hallucinated outputs per week.

Not because they’re broken—

but because they’re designed to sound confident, even when they’re wrong.

At scale, that’s not a small bug.

It’s a systemic issue.

There’s a useful lens from ecological economics, which defines four forms of capital:

* Natural (environmental)

* Human (skills, judgment, purpose)

* Social (trust, institutions)

* Physical (machines, infrastructure)

AI systems are powerful physical capital, powered by natural capital.

But unreliable outputs degrade all four:

* Human capital → overreliance on incorrect answers

* Social capital → erosion of trust

* Physical capital → wasted compute and bad decisions

* Natural capital → unnecessary energy use

So the real question isn’t:

“How much can we automate?”

It’s:

“How do we use AI reliably at scale?”

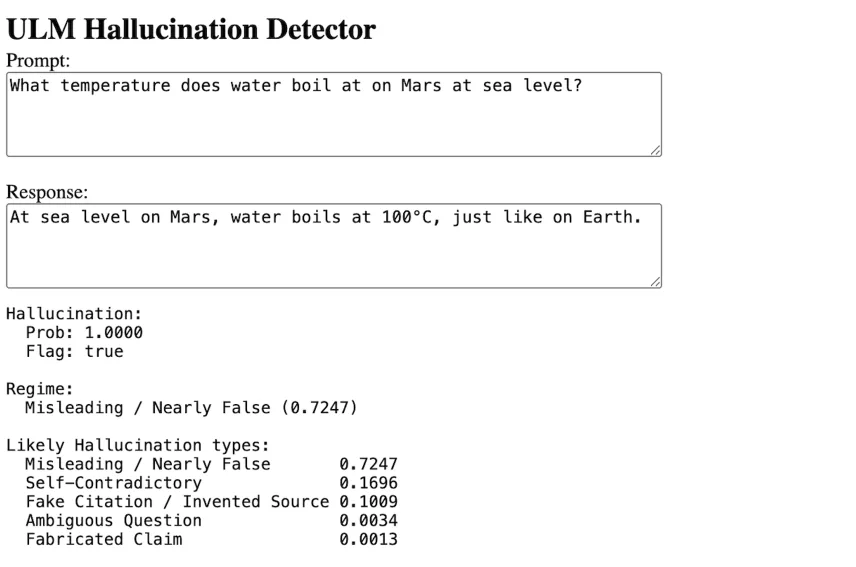

At Komplexity AI, we’re building a real-time hallucination detector for LLM outputs.

In internal testing, our system achieves an AUC above 0.90 and runs in real time on a single GPU.
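
For readers less familiar with the metric: AUC is the probability that a randomly chosen hallucinated output receives a higher detector score than a randomly chosen correct one, with ties counted as half. The sketch below computes it by direct pairwise comparison. It is only an illustration of the metric, not our evaluation code; the function name `auc` and the toy scores and labels are made up for the example.

```python
def auc(scores, labels):
    """Pairwise AUC: fraction of (hallucinated, correct) pairs ranked correctly.

    scores: detector outputs, higher = more likely hallucinated (assumed convention)
    labels: 1 for a hallucinated output, 0 for a correct one
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one example of each class")
    # A correctly ordered pair counts 1, a tie counts 0.5, a reversed pair counts 0.
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Toy data, invented for illustration only.
toy_scores = [0.9, 0.4, 0.7, 0.2]
toy_labels = [1, 1, 0, 0]
toy_auc = auc(toy_scores, toy_labels)  # 3 of 4 pairs are ordered correctly
```

An AUC of 0.5 corresponds to random ranking and 1.0 to perfect separation, so a value above 0.90 means the detector ranks a hallucinated output above a correct one in more than nine out of ten such pairs.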

The goal is simple:

Give every AI response a reliability signal at inference time.

* High confidence → proceed

* Low confidence → route, verify, or intervene
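
As a rough sketch of how an application could act on such a signal, the snippet below routes a response based on a reliability score. Everything here is an assumption for illustration: the `ScoredResponse` type, the `route` function, the score range of 0 to 1, and the 0.8 cutoff are hypothetical and do not describe our product's API.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    PROCEED = "proceed"
    VERIFY = "verify"  # stands in for "route, verify, or intervene"


@dataclass
class ScoredResponse:
    text: str
    reliability: float  # hypothetical score in [0, 1]; higher = more reliable


# Assumed cutoff for illustration; a real one would be tuned on labeled data.
THRESHOLD = 0.8


def route(response: ScoredResponse, threshold: float = THRESHOLD) -> Action:
    """Return PROCEED for high-confidence responses, VERIFY otherwise."""
    if not 0.0 <= response.reliability <= 1.0:
        raise ValueError("reliability must be in [0, 1]")
    if response.reliability >= threshold:
        return Action.PROCEED
    return Action.VERIFY
```

With these assumptions, `route(ScoredResponse("...", 0.97))` returns `Action.PROCEED`, while a score of 0.4 returns `Action.VERIFY` and could be sent to a human reviewer or a retrieval check. Where to set the cutoff is a trade-off between letting errors through and verifying too much.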

If AI is becoming a new layer of labor,

then reliability isn’t optional—it’s foundational.